Learn how to scrape Amazon effectively using proxies. This guide covers detection evasion, proxy selection, request patterns, and scaling strategies for product data.

Why Amazon Is One of the Hardest Sites to Scrape

Amazon's defences operate on multiple layers simultaneously. At the network level, the platform maintains an extensive IP reputation database that scores every incoming address based on historical behaviour, ISP classification, and geographic consistency. Datacenter IPs are flagged almost immediately because Amazon knows residential customers don't browse from AWS or Google Cloud ranges. Even legitimate datacenter traffic gets scrutinised with extra challenges.

At the session level, Amazon tracks behavioural signals across requests. It monitors navigation sequences, time between page loads, mouse movement patterns on interactive elements, and whether requests follow patterns consistent with human browsing. A script that hits product pages in rapid succession without ever visiting a category page or search results page is trivially detectable.

Amazon also employs sophisticated fingerprinting that goes beyond IP addresses. TLS handshake characteristics, HTTP/2 settings, JavaScript execution environment, and browser API responses all contribute to a connection fingerprint. When that fingerprint doesn't match any known browser profile, the request receives heightened scrutiny, often resulting in CAPTCHA challenges or soft blocks where the page loads but critical data like pricing is omitted.

What Product Data Is Worth Collecting

The highest-value data points for most use cases:

- Product titles and descriptions: Essential for catalogue matching, keyword analysis, and tracking how competitors position their listings over time.

- Pricing data: Current price, list price, deal price, Subscribe & Save price, and price per unit. Amazon frequently shows different prices based on seller, fulfillment method, and customer segment.

- Buy Box status: Which seller currently holds the Buy Box is critical competitive intelligence. Track Buy Box ownership over time to understand rotation patterns and pricing thresholds that win it.

- Best Sellers Rank (BSR): The single best proxy for sales velocity. Track BSR daily to estimate competitor sales volume and identify trending products in your category.

- Review data: Star rating, review count, and recent review sentiment reveal product quality perception and customer satisfaction trends.

- Stock and fulfillment signals: "In Stock," "Only X left," "Available from these sellers," and delivery date estimates indicate inventory levels and supply chain health.

- Seller information: Seller name, rating, fulfillment method (FBA vs FBM), and number of sellers on a listing.

Map each data point to a specific business decision it informs. If a data point doesn't drive a decision, don't scrape it. Every unnecessary field increases your bandwidth consumption, parsing complexity, and detection surface.

How Amazon Detects and Blocks Scrapers

Rate limiting is the first layer. Amazon tracks requests per IP per time window and applies progressively stricter responses: first serving degraded content (missing prices or reviews), then CAPTCHA challenges, then temporary blocks, and finally long-term IP bans. The thresholds aren't public, but practical experience shows that exceeding 8 to 12 requests per minute from a single IP consistently triggers escalation.

Session integrity checks form the second layer. Amazon assigns session tokens through cookies and validates that subsequent requests carry valid, unexpired tokens from the same IP that received them. Session tokens that appear on a different IP, or requests without valid session cookies, receive heightened scrutiny.

Behavioural analysis is the third and most sophisticated layer. Amazon's system builds a behavioural profile of each session and compares it against models of legitimate browsing. Signals that flag automated access include: perfectly consistent request intervals, accessing only product detail pages without search or category navigation, requesting pages in a systematic URL pattern, and missing referrer headers that a browser would naturally include.

CAPTCHA deployment is the enforcement mechanism. Amazon primarily uses its own CAPTCHA system that presents image recognition challenges. Once triggered, CAPTCHAs persist for the remainder of the session and often extend to subsequent sessions from the same IP. A high CAPTCHA rate is a clear signal that your scraping approach needs fundamental changes, not just more proxy bandwidth.
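To track where you sit on this escalation ladder, it helps to classify every response rather than only counting HTTP errors. The following is a minimal sketch: the status codes and CAPTCHA marker strings are assumptions for illustration, not confirmed Amazon behaviour, and should be checked against the responses you actually receive.

```python
from enum import Enum


class Outcome(Enum):
    OK = "ok"
    DEGRADED = "degraded"   # page loaded but required fields are missing
    CAPTCHA = "captcha"
    BLOCKED = "blocked"


# Hypothetical markers: verify against real responses before relying on them.
CAPTCHA_MARKERS = ("captcha", "enter the characters you see")
BLOCK_STATUSES = {403, 429, 503}


def classify_response(status: int, body: str, required_fields: dict) -> Outcome:
    """Map one response onto the escalation ladder described above.

    required_fields maps field names to the values your parser extracted
    (None when a field was missing from the page).
    """
    if status in BLOCK_STATUSES:
        return Outcome.BLOCKED
    lowered = body.lower()
    if any(marker in lowered for marker in CAPTCHA_MARKERS):
        return Outcome.CAPTCHA
    if any(value is None for value in required_fields.values()):
        return Outcome.DEGRADED
    return Outcome.OK


def captcha_rate(outcomes: list) -> float:
    """Share of responses that were CAPTCHA challenges."""
    if not outcomes:
        return 0.0
    return sum(o is Outcome.CAPTCHA for o in outcomes) / len(outcomes)
```

Tracking the CAPTCHA and degraded rates per IP and per session makes it easier to see which layer you are tripping before temporary blocks begin.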

Proxy Requirements for Amazon Scraping

Residential proxies work because they carry IP addresses assigned by consumer ISPs: Comcast, AT&T, Vodafone, BT, and thousands of others. These addresses are indistinguishable from genuine Amazon shoppers at the network level. Amazon cannot aggressively block residential IPs without risking false positives against real customers, which gives your scraping traffic room to operate.

Geographic targeting is equally critical. Amazon operates separate marketplaces with independent pricing, inventory, and seller ecosystems. To get accurate data from amazon.com, you need US-based residential proxies. For amazon.co.uk, use UK proxies. For amazon.de, German proxies. A request arriving at amazon.com from a German IP will work, but it may receive different content. Amazon sometimes shows different pricing, availability, or product suggestions based on the visitor's apparent location.

Pool size matters. For sustained Amazon scraping at any meaningful scale, you need access to thousands of unique residential IPs. If you're scraping 10,000 product pages per day at a safe rate of 6 to 8 requests per IP per session, that works out to about 1,250 to 1,670 sessions, or roughly 1,500 to 2,000 unique IPs per day once you add headroom for cooldown rotation. Databay's pool of 34M+ residential IPs across 200+ countries provides the depth needed to sustain Amazon operations long-term without exhausting clean addresses.
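The pool-size estimate is simple arithmetic, sketched below. The function name and the 20% headroom figure are assumptions chosen for illustration; adjust the headroom to match your own cooldown policy.

```python
def ips_needed(pages_per_day: int, requests_per_ip: int, headroom_pct: int = 20) -> int:
    """Estimate unique IPs per day, assuming each IP serves one session.

    headroom_pct is an assumed buffer for IPs resting in cooldown.
    Integer maths is used throughout to avoid floating-point rounding.
    """
    sessions = -(-pages_per_day // requests_per_ip)      # ceiling division
    return -(-sessions * (100 + headroom_pct) // 100)    # add headroom, round up


low = ips_needed(10_000, requests_per_ip=8)
high = ips_needed(10_000, requests_per_ip=6)
print(f"Plan for roughly {low} to {high} unique IPs per day")
```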

Request Patterns That Avoid Detection

Mimic real navigation flows. Real Amazon shoppers don't type product ASINs directly into the URL bar. They search, browse categories, click through results, and land on product pages through natural navigation. Structure your scraping sessions similarly: start with a search query or category page, extract product URLs from the results, then visit product pages with appropriate referrer headers pointing back to the search results page.

Randomise request timing. Never use fixed intervals between requests. A request every exactly 5.0 seconds is an unmistakable bot signature. Instead, sample delays from a distribution that mimics human reading time. A log-normal distribution with a median of 4 to 6 seconds and occasional longer pauses of 15 to 30 seconds (simulating a user reading a product description) works well in practice.
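One way to sample such delays is shown below. This is a sketch under stated assumptions: the spread (`sigma`) and the 10% chance of a long pause are illustrative values, not figures taken from Amazon's behaviour.

```python
import math
import random


def human_delay(rng: random.Random,
                median_s: float = 5.0,
                sigma: float = 0.5,
                long_pause_prob: float = 0.1) -> float:
    """Return a delay in seconds before the next request.

    Most delays come from a log-normal distribution whose median is
    median_s (the median of a log-normal is exp(mu)). Occasionally a
    longer 15 to 30 second pause simulates reading a product description.
    sigma and long_pause_prob are assumed values; tune them to taste.
    """
    if rng.random() < long_pause_prob:
        return rng.uniform(15.0, 30.0)
    return rng.lognormvariate(math.log(median_s), sigma)


rng = random.Random()
for _ in range(5):
    print(f"sleep {human_delay(rng):.1f}s")  # pass the value to time.sleep() in a real loop
```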

Avoid sequential access patterns. Don't scrape products in ASIN order, price order, or any other systematic sequence. Randomise your target list before each scraping session. If you're monitoring a fixed catalogue of 5,000 products, shuffle the list and scrape in random order each day.

Include non-target page requests. Sprinkle in requests to category pages, Amazon's homepage, or search results pages between product page requests. A session that visits 50 product pages without ever loading a single non-product page is suspicious. Aim for a ratio of roughly 1 non-product page per 5 to 8 product pages.
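The shuffling and interleaving advice above can be combined into a single session planner. The sketch below is illustrative only: `build_session_plan` and the example URLs are hypothetical, and the filler pages would be whatever category, search, or home pages fit your targets.

```python
import random


def build_session_plan(product_urls: list, filler_urls: list, rng: random.Random) -> list:
    """Return product URLs in random order with a non-product page
    inserted after every 5 to 8 product pages."""
    products = list(product_urls)
    rng.shuffle(products)                  # no ASIN-order or price-order patterns
    plan = []
    until_filler = rng.randint(5, 8)
    for url in products:
        plan.append(url)
        until_filler -= 1
        if until_filler == 0:
            plan.append(rng.choice(filler_urls))
            until_filler = rng.randint(5, 8)
    return plan


# Hypothetical URLs for illustration.
products = [f"https://www.amazon.com/dp/EXAMPLE{i:03d}" for i in range(40)]
fillers = ["https://www.amazon.com/", "https://www.amazon.com/s?k=example+query"]
plan = build_session_plan(products, fillers, random.Random())
```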

Handling Amazon's Dynamic Content

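Much of the data on an Amazon product page is loaded by JavaScript after the initial HTML arrives, so a plain HTTP fetch can miss it. You have two approaches to handle this, each with distinct tradeoffs.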

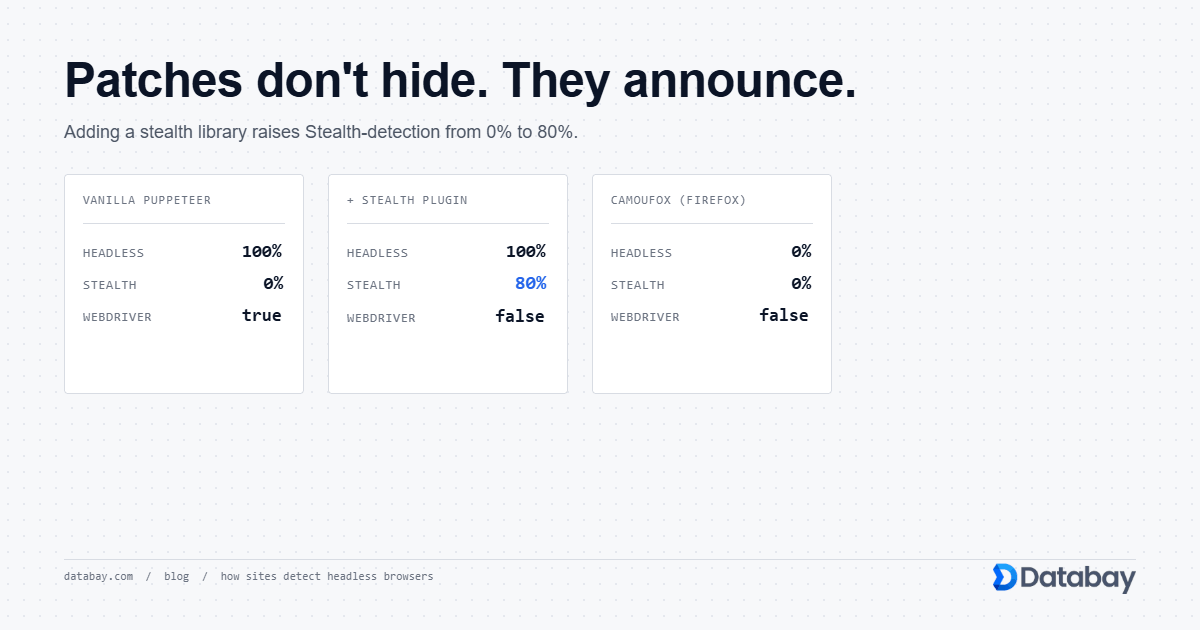

Headless browser rendering is the most reliable method. Tools like Playwright or Puppeteer load the page in a real browser environment, execute all JavaScript, and give you access to the fully rendered DOM. Every data point visible to a human shopper is available for extraction. The cost is resource consumption: each headless browser instance uses 100 to 300MB of RAM, and page load times of 3 to 8 seconds mean lower throughput per worker. Proxy costs also rise under this approach because each request consumes more bandwidth loading images, CSS, fonts, and JavaScript bundles.

API interception is faster and cheaper when it works. Use your browser's network tab to identify the XHR and fetch requests Amazon makes after the initial page load. Many data points are delivered through internal API endpoints that return structured JSON. If you can replicate these API calls directly (with the correct headers, cookies, and authentication tokens) you skip the entire rendering step. The risk is that Amazon's internal APIs change without notice, and they may require session tokens that are difficult to generate outside a browser context.

The pragmatic approach combines both: use headless browser sessions to discover and validate API endpoints, then switch to direct HTTP requests for production-scale collection. Fall back to full rendering for pages where the API approach breaks.
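The fallback logic can be kept independent of any particular HTTP client or browser tool. In the sketch below, `fetch_api` and `fetch_rendered` are hypothetical callables that you would supply (for example, a direct HTTP call and a headless browser wrapper); the required field names are also assumptions.

```python
from typing import Callable, Optional


def collect(asin: str,
            fetch_api: Callable[[str], Optional[dict]],
            fetch_rendered: Callable[[str], dict],
            required: tuple = ("title", "price")) -> dict:
    """Try the cheap direct-API path first; fall back to full rendering
    when the call fails or returns incomplete data."""
    try:
        data = fetch_api(asin)
    except Exception:
        data = None                        # endpoint changed, token rejected, etc.
    if data and all(data.get(field) is not None for field in required):
        return {**data, "source": "api"}
    return {**fetch_rendered(asin), "source": "rendered"}
```

Recording the `source` of each record lets you monitor how often the fallback fires, which is an early warning that an internal endpoint has changed.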

API Alternatives Worth Considering

Amazon's Product Advertising API (PA-API) gives you access to product titles, descriptions, pricing, images, review summaries, availability, and browse node categorisation. The data is clean, structured, and delivered in JSON format without any scraping, parsing, or proxy costs. For businesses that need product data to power comparison shopping, affiliate content, or catalogue enrichment, PA-API is the path of least resistance.

The limitations are real. Rate limits cap new associates at 1 request per second and 8,640 requests per day, scaling up based on revenue generated through the affiliate program. This makes PA-API impractical for large-scale price monitoring or thorough catalogue tracking. You cannot access BSR data, detailed seller information, review text, or many of the competitive intelligence signals that make Amazon data valuable. Coverage is limited to the product catalogue without historical data or trend information.

For many teams, the right answer is a hybrid approach. Use PA-API for baseline product information where its data coverage is sufficient. Supplement with proxy-based scraping for the high-value data points PA-API doesn't expose: BSR tracking, Buy Box monitoring, detailed seller analytics, review sentiment analysis, and pricing history across multiple daily checkpoints. This cuts your scraping volume by handling the easy data through official channels, letting you focus proxy resources on the data that only scraping can provide.

Scaling Amazon Scraping Safely

Start with a conservative baseline. Begin at 1 to 2 requests per second across your entire operation, not per proxy. Monitor success rates (HTTP 200 responses with complete data) closely. If success rates stay above 95% for 48 hours, gradually increase throughput by 20 to 30%. If success rates drop below 90%, reduce volume immediately and investigate which detection signal you're triggering.
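The ramp-up rule above can be expressed as a small function. This is a sketch: the 20% step comes from the range given above, while halving the rate on a drop is an assumed back-off factor, since the right reduction depends on how severe the drop is.

```python
def next_rate(current_rps: float,
              success_rate: float,
              hours_stable: float,
              step: float = 0.2,
              backoff: float = 0.5) -> float:
    """Return the request rate (requests per second, whole operation)
    to use for the next monitoring window.

    success_rate is the share of HTTP 200 responses with complete data.
    hours_stable is how long success_rate has stayed above 95%.
    backoff=0.5 is an assumption; the rule only says to reduce volume.
    """
    if success_rate < 0.90:
        return current_rps * backoff       # cut volume immediately, then investigate
    if success_rate > 0.95 and hours_stable >= 48:
        return current_rps * (1 + step)    # gradual 20 to 30% increase
    return current_rps                     # hold steady between the thresholds


print(next_rate(1.0, success_rate=0.97, hours_stable=48))
```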

Distribute load across time. Unless you need real-time pricing data, spread your scraping across the full 24-hour cycle rather than concentrating it in bursts. Scraping 10,000 products at a steady rate of 7 per minute over 24 hours is far less detectable than scraping the same 10,000 products in a 3-hour window at 56 per minute. Amazon's detection systems specifically flag traffic spikes.

Implement per-ASIN cooldowns. After scraping a specific product page, don't request it again for at least 4 to 6 hours from the same IP. Maintain a mapping of which IPs have accessed which ASINs and enforce minimum intervals. This prevents the pattern where Amazon sees the same product requested repeatedly from the same proxy pool range.
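A minimal in-memory version of that IP-to-ASIN mapping might look like the following. The class name is hypothetical, and a production system would likely persist this state in a shared store rather than a Python dict.

```python
import time
from typing import Optional


class AsinCooldown:
    """Track when each (IP, ASIN) pair was last requested."""

    def __init__(self, min_interval_s: float = 4 * 3600):
        self.min_interval_s = min_interval_s
        self._last_seen = {}               # (ip, asin) -> unix timestamp

    def can_request(self, ip: str, asin: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        last = self._last_seen.get((ip, asin))
        return last is None or now - last >= self.min_interval_s

    def record(self, ip: str, asin: str, now: Optional[float] = None) -> None:
        self._last_seen[(ip, asin)] = time.time() if now is None else now


cooldown = AsinCooldown()
if cooldown.can_request("203.0.113.7", "EXAMPLEASIN"):
    # ... perform the request here ...
    cooldown.record("203.0.113.7", "EXAMPLEASIN")
```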

Use separate proxy pools for separate tasks. Your product page scraping, search results collection, and review monitoring should use independent proxy pools. If one task triggers heightened detection and its IPs get flagged, the contamination doesn't spread to your other data collection workflows. Databay's proxy management lets you segment traffic across different proxy endpoints precisely for this purpose.

Data Extraction and Storage Best Practices

Build your extractors with multiple fallback selectors for each data point. For pricing, Amazon may display the current price in different container elements depending on the product category, deal status, or layout variant. A solid price extractor checks 3 to 4 known selector paths and validates that the extracted value is a plausible price (numeric, within expected range for the product category). Log extraction failures at the field level so you can quickly identify when Amazon changes a page structure.
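A fallback extractor along these lines is sketched below. The `select` callable stands in for whatever your HTML parser uses to return the text at a selector (or `None`), and the selector names in the example are hypothetical rather than real Amazon markup. The price parser assumes US-style formatting such as `$1,299.99`.

```python
import logging
import re
from typing import Callable, Optional

logger = logging.getLogger("extract")

# Assumes US-style numbers: commas as thousands separators, dot as decimal point.
PRICE_RE = re.compile(r"\d[\d,]*(?:\.\d+)?")


def parse_price(text: Optional[str]) -> Optional[float]:
    match = PRICE_RE.search(text or "")
    return float(match.group(0).replace(",", "")) if match else None


def extract_price(select: Callable[[str], Optional[str]],
                  selectors: list,
                  min_price: float,
                  max_price: float) -> Optional[float]:
    """Try each selector in order and return the first plausible price."""
    for selector in selectors:
        price = parse_price(select(selector))
        if price is not None and min_price <= price <= max_price:
            return price
    # Field-level failure log: a spike here suggests a page layout change.
    logger.warning("extraction failed: field=price selectors=%s", selectors)
    return None


# Hypothetical selector names and page content for illustration.
page = {"#deal-price": None, "#core-price": "$1,299.99", "#legacy-price": "$5.00"}
print(extract_price(page.get, list(page), min_price=1.0, max_price=5000.0))
```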

Store raw HTML alongside extracted data. This costs storage space but provides invaluable insurance. When an extraction bug produces incorrect data, you can re-parse the stored HTML with corrected logic rather than re-scraping the pages, saving both time and proxy bandwidth. Compress stored HTML with gzip to reduce the storage footprint by 80 to 90%.
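Compressing and restoring a page with Python's standard `gzip` module takes only a few lines. The helper names here are hypothetical, and the actual compression ratio will depend on the page.

```python
import gzip


def pack_html(html: str) -> bytes:
    """Compress raw HTML for archival storage."""
    return gzip.compress(html.encode("utf-8"))


def unpack_html(blob: bytes) -> str:
    """Restore archived HTML so it can be re-parsed with corrected logic."""
    return gzip.decompress(blob).decode("utf-8")


html = "<html>" + "<div class='row'>example</div>" * 500 + "</html>"
blob = pack_html(html)
print(len(html.encode("utf-8")), "bytes raw ->", len(blob), "bytes compressed")
```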

For time-series data like pricing and BSR, use an append-only storage model. Never overwrite yesterday's price with today's price. Each observation should be a distinct record with a timestamp, source IP location, and extraction metadata. This historical dataset becomes increasingly valuable over time for trend analysis, seasonal pattern detection, and predictive modelling. A simple schema works: ASIN, data point name, value, timestamp, proxy country, and a confidence score indicating extraction reliability.
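The schema described above maps directly onto a single table. The sketch below uses SQLite for brevity; the table and function names are assumptions, and values are stored as text so one table can hold prices, ranks, and stock messages alike.

```python
import sqlite3
from datetime import datetime, timezone

SCHEMA = """
CREATE TABLE IF NOT EXISTS observations (
    asin          TEXT NOT NULL,
    data_point    TEXT NOT NULL,
    value         TEXT NOT NULL,
    observed_at   TEXT NOT NULL,   -- ISO 8601, UTC
    proxy_country TEXT NOT NULL,
    confidence    REAL NOT NULL    -- 0.0 to 1.0 extraction reliability
)
"""


def append_observation(conn, asin, data_point, value, proxy_country,
                       confidence, observed_at=None):
    """Insert a new row; existing rows are never updated or deleted."""
    ts = observed_at or datetime.now(timezone.utc).isoformat()
    conn.execute(
        "INSERT INTO observations VALUES (?, ?, ?, ?, ?, ?)",
        (asin, data_point, str(value), ts, proxy_country, confidence),
    )
    conn.commit()


def history(conn, asin, data_point):
    """Return (timestamp, value) pairs in chronological order."""
    return conn.execute(
        "SELECT observed_at, value FROM observations "
        "WHERE asin = ? AND data_point = ? ORDER BY observed_at",
        (asin, data_point),
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
append_observation(conn, "EXAMPLEASIN", "price", 19.99, "US", 0.95)
```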

Legal Considerations for Amazon Scraping

The key legal distinction is between publicly available data and protected content. Product titles, prices, ratings, and availability information displayed on public product pages are visible to anyone with a web browser. No login, subscription, or agreement is required to view them. Courts in multiple jurisdictions have recognised that collecting publicly available information does not constitute unauthorised access, even when a site's ToS restricts automated collection. In the US, the Ninth Circuit's decision in hiQ Labs v. LinkedIn held that scraping publicly accessible data is unlikely to violate the Computer Fraud and Abuse Act, although breach-of-contract claims based on a site's ToS remain a separate risk.

Several categories of Amazon data carry higher legal risk. Copyrighted product descriptions and images belong to the sellers or manufacturers who created them. Reproducing this content at scale may create copyright liability. Customer review text is authored by individual users, and using it in certain commercial contexts may raise issues. Personal information about sellers or reviewers requires careful handling under privacy regulations like GDPR and CCPA.

Practical risk management for Amazon scraping:

- Limit collection to factual product data: prices, ratings, BSR, availability, and seller identifiers.

- Do not republish copyrighted descriptions or images without authorisation.

- Avoid collecting personal data about individuals unless you have a lawful basis.

- Keep records of what you collect, how you use it, and your data retention policies.

- Consult legal counsel before launching large-scale commercial operations based on scraped Amazon data.