A practical guide to ethical web scraping covering robots.txt, rate limiting, personal data, GDPR compliance, terms of service, and building sustainable scraping policies.

The Ethical Framework: Can vs. Should

Ethical web scraping starts with a simple principle: treat other people's infrastructure and content with the same respect you would want for your own. If you ran a website, you would not want a scraper consuming 40% of your server capacity, ignoring your robots.txt, and redistributing your content without attribution. That perspective should guide every technical decision.

This isn't about being soft or leaving value on the table. Ethical scraping is a strategic choice with concrete benefits. Sites that detect aggressive, boundary-violating scrapers respond by deploying stronger anti-bot measures, implementing CAPTCHAs, and blocking entire IP ranges. This arms race makes scraping harder and more expensive for everyone. Organisations that scrape responsibly build sustainable access to data sources that aggressive scrapers eventually lose access to entirely.

The framework has three pillars: respect the infrastructure (don't overload servers), respect the rules (follow robots.txt and rate limits), and respect the content (understand intellectual property implications). Each pillar has specific, actionable practices that the following sections will cover in detail.

robots.txt as a Social Contract

A robots.txt file communicates clear intent. When a site operator writes Disallow: /api/ or Disallow: /user-profiles/, they are telling you that automated access to those paths is unwelcome. Ignoring that is equivalent to walking through a door marked Private. The door is unlocked, but the message is clear.

Practical implementation means:

- Check robots.txt before scraping any new domain. Parse the file programmatically and configure your scraper to respect Disallow directives. Libraries exist for every major language: Python's urllib.robotparser, Node's robots-parser

- Respect Crawl-delay directives. If a site specifies Crawl-delay: 5, wait at least 5 seconds between requests to that domain, even if your proxy pool could support much higher throughput. The directive is about server capacity, not IP detection

- Check for AI-specific directives. Since 2023, many sites have added directives targeting AI crawlers specifically (GPTBot, CCBot, Google-Extended). Respect these even if your scraper does not identify as one of these bots

- Re-check periodically. robots.txt policies change. A site that permitted scraping six months ago may have added restrictions. Re-fetch robots.txt at least monthly for active scraping targets
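The checks in the list above can be sketched with Python's standard urllib.robotparser module. This is a minimal sketch under stated assumptions: the robots.txt content, the DataCollector user agent, and the collecting_for_ai flag are hypothetical, and the file is inlined so the example runs without network access. In a real scraper you would fetch the file from the target domain and re-fetch it on a schedule.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the sketch runs offline.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Crawl-delay: 5
Disallow: /api/
Disallow: /user-profiles/
"""

# AI crawler tokens named in the list above.
AI_CRAWLER_TOKENS = ("GPTBot", "CCBot", "Google-Extended")


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a rule set."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(parser, user_agent, url, collecting_for_ai=False):
    """Check the rules for our own agent and, optionally, the AI crawler tokens.

    collecting_for_ai is an assumption of this sketch: when the data feeds an
    AI pipeline, a path blocked for any listed AI crawler is treated as blocked.
    """
    if not parser.can_fetch(user_agent, url):
        return False
    if collecting_for_ai:
        return all(parser.can_fetch(token, url) for token in AI_CRAWLER_TOKENS)
    return True


rules = load_rules(ROBOTS_TXT)
delay = rules.crawl_delay("DataCollector/1.0")  # seconds, or None if unspecified
```

Treating an AI-specific block as binding only when the data feeds an AI pipeline is one reading of the guidance. A stricter policy could apply those rules to every job.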

The legal weight of robots.txt varies by jurisdiction, but multiple courts have referenced robots.txt compliance (or non-compliance) as a factor in scraping-related cases. Respecting it strengthens your legal position.

Rate Limiting as Good Citizenship

A small e-commerce site running on a single server might handle 50-100 concurrent users comfortably. A scraper making 10 requests per second can take up the equivalent of 10-20% of that capacity. During peak traffic, those extra requests might be the difference between the site loading in 2 seconds versus 5 seconds for real customers. That's a tangible harm, and it's your scraper causing it.

Responsible rate limiting means:

- Start slow and measure. Begin with 1 request per 3-5 seconds for any new target domain. Monitor response times. If they increase compared to manual browsing, you are adding server load. Increase speed only after confirming the site handles it without degradation

- Respect the site's size. Major platforms like Amazon or Google can handle aggressive scraping without blinking. A small business website running on shared hosting cannot. Adjust your rate limits to the target's apparent infrastructure

- Scrape during off-peak hours. If your data collection does not need to happen during business hours, schedule it for nights and weekends when server load is typically lower. This is especially important for sites serving a single geographic region

- Use conditional requests. Send If-Modified-Since or If-None-Match headers to avoid re-downloading pages that have not changed. This reduces the load on the target server and your bandwidth consumption at the same time
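As a rough illustration of the conditional-request practice above, the following sketch uses only the standard library. The cache dictionary layout (keys etag and last_modified) and the function names are assumptions made for the example, not part of any particular framework.

```python
import urllib.request
from urllib.error import HTTPError


def conditional_headers(cached: dict) -> dict:
    """Build validator headers from metadata stored with an earlier response."""
    headers = {}
    if cached.get("etag"):
        headers["If-None-Match"] = cached["etag"]
    if cached.get("last_modified"):
        headers["If-Modified-Since"] = cached["last_modified"]
    return headers


def fetch_if_changed(url: str, cached: dict):
    """Return (body, new_cache_entry), or (None, cached) when the page is unchanged."""
    request = urllib.request.Request(url, headers=conditional_headers(cached))
    try:
        with urllib.request.urlopen(request, timeout=30) as response:
            body = response.read()
            entry = {
                "etag": response.headers.get("ETag"),
                "last_modified": response.headers.get("Last-Modified"),
            }
            return body, entry
    except HTTPError as err:
        if err.code == 304:  # Not Modified: keep using the stored copy
            return None, cached
        raise
```

urllib raises HTTPError for a 304 response, which is why the unchanged case is handled in the except branch.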

The proxy temptation is real. A large proxy pool lets you distribute requests so no single IP triggers rate limits. But distributing 100 requests per second across 100 IPs still hits the server with 100 requests per second. Rate limiting should be per-domain, not per-IP.
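One way to make the per-domain rule concrete is a limiter keyed by hostname rather than by outgoing IP. The class below is a minimal, single-threaded sketch. The DomainRateLimiter name and the 4-second default (picked from the 3-5 second starting range above) are assumptions. The clock and sleep parameters exist only so the behaviour can be tested without real waiting.

```python
import time
from urllib.parse import urlsplit


class DomainRateLimiter:
    """Enforce a minimum interval between requests to the same host,
    no matter which proxy or IP address sends them."""

    def __init__(self, min_interval=4.0, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._next_allowed = {}  # hostname -> earliest time of the next request

    def wait(self, url: str) -> float:
        """Block until a request to this URL's host is allowed; return seconds waited."""
        host = urlsplit(url).hostname or ""
        now = self._clock()
        ready_at = self._next_allowed.get(host, now)
        delay = max(0.0, ready_at - now)
        if delay:
            self._sleep(delay)
        # Schedule the next slot from whichever is later: now or the reserved slot.
        self._next_allowed[host] = max(now, ready_at) + self.min_interval
        return delay
```

Every worker would call limiter.wait(url) on a shared instance before sending a request, so adding proxies does not raise the load on any single site. A multi-threaded scraper would also need a lock around the shared dictionary.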

Public Data vs. Private Data

Clearly public data includes information published for broad consumption with no access controls: published product prices, public company filings, government records, news articles on freely accessible sites, published academic papers, and publicly listed job postings. Scraping this data is generally ethical and legal, subject to rate limiting and robots.txt considerations.

Clearly private data includes information behind authentication barriers: user account details, private messages, purchase histories, medical records, and content on login-required platforms. Scraping this data by creating accounts, bypassing paywalls, or exploiting authentication vulnerabilities is unethical and often illegal.

The gray zone is where ethical judgment matters most. Social media profiles set to public are accessible but may contain personal information. Public reviews include opinions that individuals may not want aggregated. Forum posts were shared in a specific community context, not for mass data collection. Directory listings compile personal contact information that is technically public.

For gray-zone data, apply these tests:

- Reasonable expectations. Would the person who posted this content reasonably expect it to be collected at scale by an automated system? A blog post: probably yes. A support forum question: probably not

- Sensitivity. Does the data reveal sensitive information about identifiable individuals? Health discussions, political views, financial situations, and relationship details deserve extra caution regardless of their technical accessibility

- Purpose. What will you do with the data? Aggregating public prices for a comparison tool is very different from building profiles of individuals from their public posts

GDPR, Personal Data, and Global Privacy Requirements

For ethical web scraping, GDPR has significant practical implications:

- Lawful basis. GDPR requires a lawful basis for processing personal data. The most relevant bases for scraping are legitimate interest (you have a valid business reason that does not override the individual's rights) and consent (the individual agreed to the processing). Legitimate interest requires a documented balancing test: your interest weighed against the impact on individuals

- Data minimisation. Collect only the personal data you actually need for your stated purpose. If you need company information from LinkedIn, do not scrape profile photos, personal bios, and connection counts alongside it

- Storage limitation. Do not retain personal data longer than necessary. Define a retention policy before you start collecting and implement automatic deletion when the retention period expires

- Individual rights. Data subjects have the right to know what data you hold about them, request deletion, and object to processing. You need processes to handle these requests even for scraped data
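Data minimisation and storage limitation in particular translate directly into code. The sketch below assumes a hypothetical allow-list of fields and a 90-day retention period. Both are placeholders that should come from your own documented purpose and balancing test, not from this example.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical allow-list and retention period for illustration only.
ALLOWED_FIELDS = {"company_name", "job_title", "company_url"}
RETENTION = timedelta(days=90)


def minimise(record: dict, collected_at: datetime) -> dict:
    """Keep only allow-listed fields and stamp the record with its collection time."""
    kept = {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
    kept["collected_at"] = collected_at
    return kept


def purge_expired(records: list, now: datetime) -> list:
    """Return only the records still inside the retention window."""
    return [r for r in records if now - r["collected_at"] <= RETENTION]


now = datetime.now(timezone.utc)
raw = {"company_name": "Acme", "job_title": "Engineer", "profile_photo": "x.jpg"}
stored = [minimise(raw, now)]        # profile_photo is dropped before storage
stored = purge_expired(stored, now)  # run on a schedule to delete old records
```

Dropping fields before they are written to storage, rather than filtering at query time, means unneeded personal data never has to be deleted later.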

GDPR can apply to any organisation processing personal data about individuals in the EU, regardless of where the organisation is based. California's CCPA, Brazil's LGPD, and similar regulations create comparable obligations in their jurisdictions. If your scraping targets include personal data from multiple countries, you need a compliance strategy that addresses the strictest applicable regulation.

Terms of Service: What They Mean and What They Do Not

ToS violations are generally not criminal offenses on their own. In the US, courts have interpreted the Computer Fraud and Abuse Act (CFAA) as not covering ToS violations that involve accessing publicly available data. In hiQ v. LinkedIn, the Ninth Circuit held that scraping publicly accessible data likely does not violate the CFAA, even where the site's ToS prohibits it. That does not make ToS irrelevant.

ToS violations can lead to civil liability. A website can sue for breach of contract (the ToS is a contract between you and the site), trespass to chattels (if your scraping caused server harm), or unfair competition (if you use scraped data to compete directly). These civil claims carry financial risk, especially for commercial scraping operations.

ToS vary enormously in scope. Some prohibit only scraping that harms the site (reasonable). Others claim ownership of all facts displayed on the site (likely unenforceable). Others prohibit any automated access whatsoever (common but rarely enforced against respectful scrapers). Read the actual ToS, not just the headline.

The ethical approach is to understand what the ToS says, assess whether your scraping activity falls within reasonable boundaries, and make an informed decision. If a site's ToS says you cannot scrape and you do it anyway, you should at least understand the risk you are accepting and ensure your scraping behaviour is respectful enough that the site would have little incentive to pursue action.

The Sustainability Argument Against Aggressive Scraping

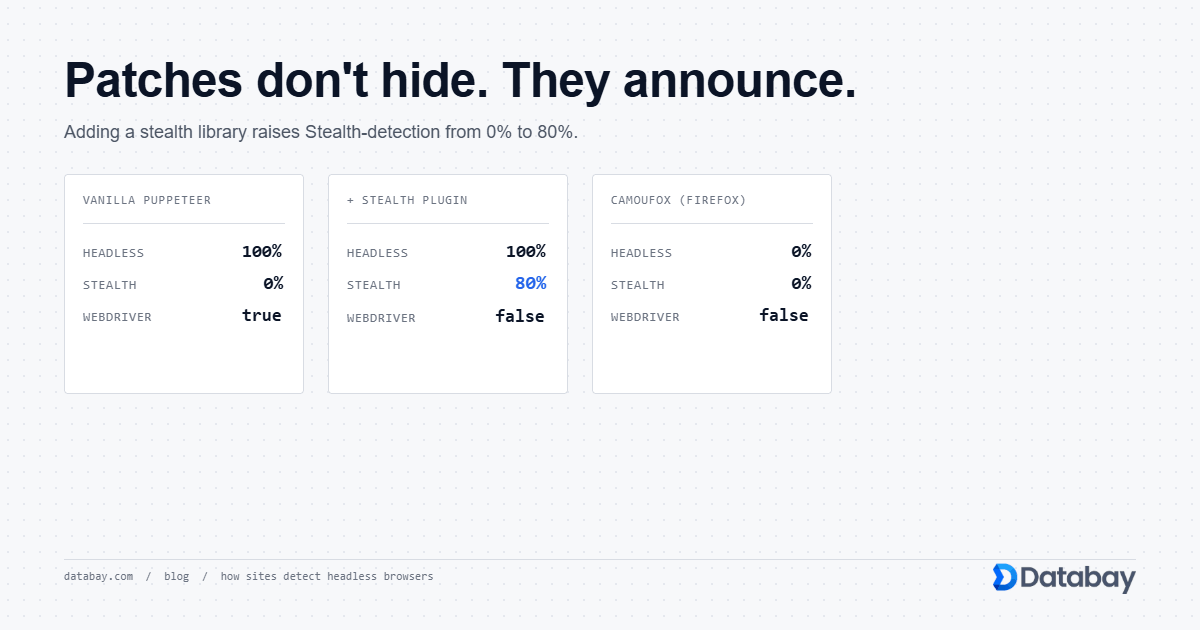

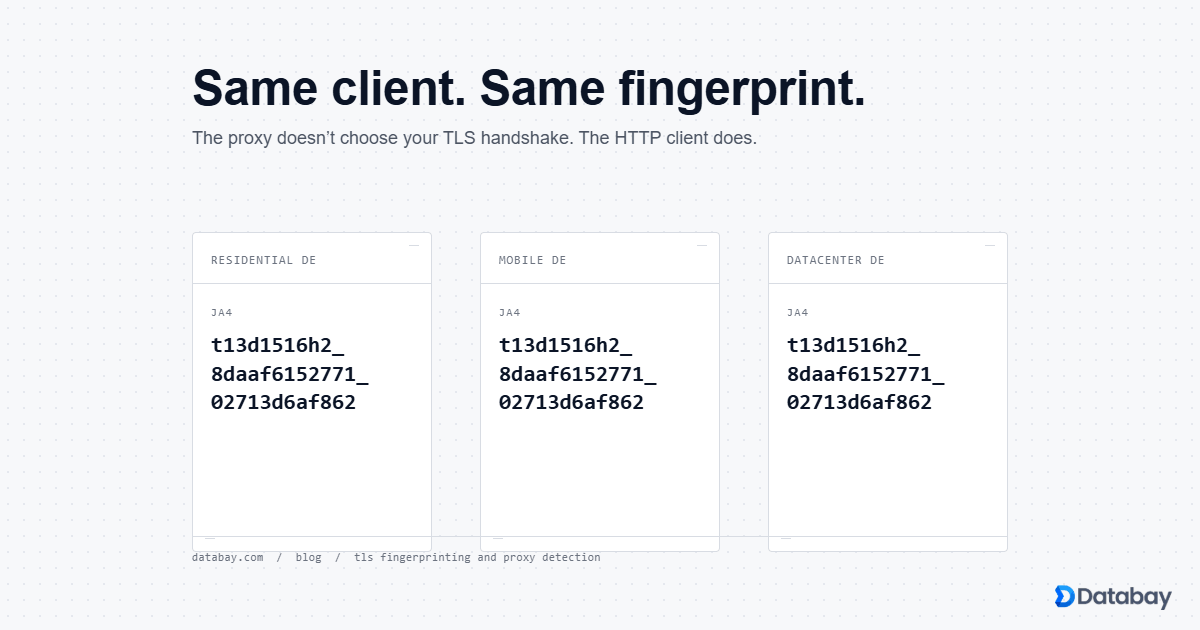

The cycle works like this. Aggressive scrapers hit a website. The website deploys Cloudflare, Akamai, or similar anti-bot protection. Scrapers evolve to bypass the protection using residential proxies, browser fingerprint spoofing, and CAPTCHA solvers. The anti-bot systems escalate to behavioural analysis, JavaScript challenges, and device fingerprinting. Scrapers respond with headless browsers simulating human behaviour. The anti-bot industry develops even more sophisticated detection. Each cycle makes scraping more expensive, more technically complex, and more likely to fail.

The organisations paying the price for this escalation aren't the aggressive scrapers who started it. They move on to the next exploit. The price is paid by every data-dependent business that needs reliable, long-term access to web data. The price is paid in higher proxy costs, more complex infrastructure, lower success rates, and more engineering time devoted to anti-detection rather than data quality.

Sustainable scraping treats web data access as a long-term resource to be managed, not a short-term opportunity to be exploited. Companies that scrape responsibly, within published boundaries, at reasonable rates, often find they can maintain consistent access for years to sites where aggressive scrapers lose access within weeks. The tortoise-and-hare dynamic applies directly: slow, steady, respectful scraping beats aggressive scraping over any time horizon longer than a few months.

Building Ethical Scraping Policies for Your Organisation

An effective scraping policy should address:

- Allowed data types. Define categories of data that are approved for collection (public prices, job listings, published content) and categories that are off-limits (personal data without lawful basis, login-required content, paywalled material)

- Rate limit standards. Set maximum request rates per domain based on estimated site capacity. Mandate Crawl-delay compliance. Require off-peak scheduling for high-volume collection

- robots.txt compliance. Make robots.txt compliance mandatory, with a documented exception process for specific business-critical cases that require management approval

- Proxy usage guidelines. Specify when residential versus datacenter proxies are appropriate. Prohibit using proxies specifically to circumvent access blocks that the site operator has intentionally deployed against your organisation

- Data retention. Define how long scraped data is stored, when it must be deleted, and how deletion is verified. This is especially critical for any data that may contain personal information

- Incident response. What happens when a scraper causes unintended harm (server overload, accidental personal data collection, ToS violation notice from a site operator)? Define escalation paths and response procedures
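A written policy is easier to enforce when its core limits are also expressed as configuration that every scraping job is checked against. The sketch below is one possible shape. The ScrapingPolicy and ScrapeJob classes, their field names, and the example values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScrapingPolicy:
    allowed_data_types: frozenset
    max_requests_per_second: float
    require_robots_compliance: bool = True
    retention_days: int = 90


@dataclass(frozen=True)
class ScrapeJob:
    domain: str
    data_type: str
    requests_per_second: float
    honours_robots: bool


def violations(job: ScrapeJob, policy: ScrapingPolicy) -> list:
    """Return human-readable policy violations for a job (an empty list means compliant)."""
    problems = []
    if job.data_type not in policy.allowed_data_types:
        problems.append(f"data type '{job.data_type}' is not approved")
    if job.requests_per_second > policy.max_requests_per_second:
        problems.append("request rate exceeds the policy maximum")
    if policy.require_robots_compliance and not job.honours_robots:
        problems.append("robots.txt compliance is mandatory")
    return problems


policy = ScrapingPolicy(
    allowed_data_types=frozenset({"public_prices", "job_listings"}),
    max_requests_per_second=0.25,
)
job = ScrapeJob("shop.example", "public_prices", 0.2, honours_robots=True)
problems = violations(job, policy)  # empty for this compliant job
```

Running such a check before a job is scheduled gives the documented exception process a natural hook: a non-empty result blocks the job until someone with authority approves it.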

Review the policy quarterly and update it as laws, industry norms, and your scraping operations evolve. Assign ownership to a specific person or team who is responsible for policy compliance.

Communicating with Site Owners

Set a descriptive User-Agent. Instead of masquerading as Chrome or using a generic bot string, identify your scraper with a custom User-Agent that includes your organisation name, a policy URL, and a contact address. Example: DataCollector/1.0 (yourcompany.com/scraping-policy; <contact email>). This lets site operators see who is accessing their content and reach out if there are concerns.
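A minimal sketch of attaching such an identity to every request, using only the standard library. The bot name follows the example above. The policy URL and contact address are hypothetical placeholders to replace with your organisation's real details.

```python
import urllib.request

# Hypothetical identity values; substitute your own organisation's details.
BOT_NAME = "DataCollector"
BOT_VERSION = "1.0"
POLICY_URL = "https://yourcompany.example/scraping-policy"
CONTACT = "scraping-contact@yourcompany.example"


def build_user_agent() -> str:
    """Compose a User-Agent that names the bot and says how to reach its operator."""
    return f"{BOT_NAME}/{BOT_VERSION} ({POLICY_URL}; {CONTACT})"


def build_request(url: str) -> urllib.request.Request:
    """Create a request object that carries the descriptive User-Agent header."""
    return urllib.request.Request(url, headers={"User-Agent": build_user_agent()})
```

Building requests through one helper keeps the identity consistent across the whole scraper, so a site operator sees the same string in every log line.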

Using an honest User-Agent feels risky. Will sites just block you? Some will, but most site operators are not opposed to all scraping. They are opposed to anonymous, aggressive, uncontrollable scraping. When they can identify you, they can contact you with specific requests (slow down, avoid certain paths, use their API instead) rather than deploying blanket anti-bot measures.

Publish a scraping policy page. Host a page on your website that explains what data you collect, how you use it, your rate limiting practices, and how site owners can request changes or exclusion. Link to this page from your User-Agent string. This demonstrates good faith and gives site operators a clear communication channel.

Respond to requests. If a site owner contacts you asking you to stop or modify your scraping, respond promptly and comply. That's both ethical and practical. A site owner who contacts you before blocking you is offering a more graceful outcome than one who goes directly to legal action.

The Business Case for Ethical Scraping

Lower infrastructure costs. Ethical scraping avoids the arms race that drives up proxy costs. When you are not fighting anti-bot systems, you need fewer retries, simpler proxy configurations, and less engineering time devoted to detection evasion. A team that respects rate limits and robots.txt can often use datacenter proxies where aggressive scrapers need expensive residential proxies.

Higher data reliability. Sustainable access to data sources means consistent, predictable data delivery to downstream systems. Aggressive scrapers face sudden access loss when sites block them, causing data gaps that disrupt business operations. Ethical scrapers build relationships that provide stable, long-term access.

Reduced legal risk. Every ToS violation, every robots.txt override, every personal data collection without lawful basis creates liability. As scraping-related litigation increases (and it has been increasing significantly since 2023), organisations with documented ethical practices have a defensible position. Organisations without them face open-ended legal exposure.

Competitive moat. Counterintuitively, ethical constraints create competitive advantages. If you can build valuable data products within ethical boundaries while competitors rely on aggressive practices that are becoming legally risky, your business model is more durable. As regulation tightens and enforcement increases, ethically-built data assets become more valuable, not less.

The return on investment for ethical scraping practices isn't theoretical. It shows up in lower cloud bills, fewer emergency engineering sprints to work around blocks, lower legal costs from scraping disputes, and stable data delivery month after month.